You Did Not Delegate to Your Agent. You Abandoned It.

Delegation is not a trust problem. It is a specification problem.

Priya runs a fourteen-person design studio in Toronto. Last Tuesday at 9:14 PM she forwarded an email to her operations manager with one line: “Can you handle this?” The email contained a client request for a revised project timeline, a budget question, and an implicit complaint about response times. Her operations manager responded the next morning. He answered the budget question, missed the timeline request, and did not register the complaint at all.

Priya did not have a trust problem. She had a specification problem. She gave a competent person a task with no structure, no context about what mattered, and no definition of what “handled” meant. He did what any reasonable person would do: he answered the easiest question and moved on.

This is exactly what most founders do with AI agents. They call it delegation. It is actually abandonment.

The Conventional Argument

The conversation around AI delegation follows a predictable script. On one side: agents are not ready. They hallucinate. They lack judgment. You cannot hand them anything consequential because they will get it wrong and you will not catch it in time. On the other side: agents are already better than junior employees. Just set them loose and review the output.

Both sides share the same assumption: delegation is primarily a question of capability. Can the agent do the work? Is it smart enough? Reliable enough?

This framing is not wrong. It is incomplete.

The Dismantle

Think about the last time you delegated something important to a human. Not a task — a function. Accounting. Customer support. Content production. Social media.

You did not hand them the job and walk away. You gave them:

a definition of what good looks like

the metrics you would use to know if it was working

the boundaries of what they could decide without asking

the context they would need to make those decisions well

a cadence for checking in

When the function failed, you almost never fired the person first. You fixed the specification first. You realized you had not told them what you actually meant by “handle this.”

AI agents fail for the same reason. Not because they lack capability. Because the founder never built the operating surface the agent needs to do the job.

The first five posts of The Compounding Founder were written by hand between February and March 2026. They followed no documented system: the voice existed in my head, the structure was improvised per post, and distribution happened whenever I remembered to open Substack. The posts were good. They were also unrepeatable. If I got sick for a week, the newsletter stopped. If I changed one thing about the writing process, there was no artifact to update, just a vague sense that the last post felt different from the one before it.

In the first week of April, I built the specification layer: a voice system with seven traits and thirty banned words, a content structure template, a distribution config, an asset strategy, and a scorecard. Six artifacts where there had been zero. Then I handed the content function to an agent.

The specification took about four hours to build. The agent produced a full draft, seven X posts, two LinkedIn posts, seven Substack Notes, and a paid deliverable template in a single run. The draft scored 88% against the voice system: above the 85% threshold for human review, below the 90% target. The gap was data density: not enough hard numbers. That is a specific, improvable failure, not “the agent is not ready.” The specification was not complete enough.

The Core Idea

Delegation without specification is not delegation. It is abandonment with a feedback delay.

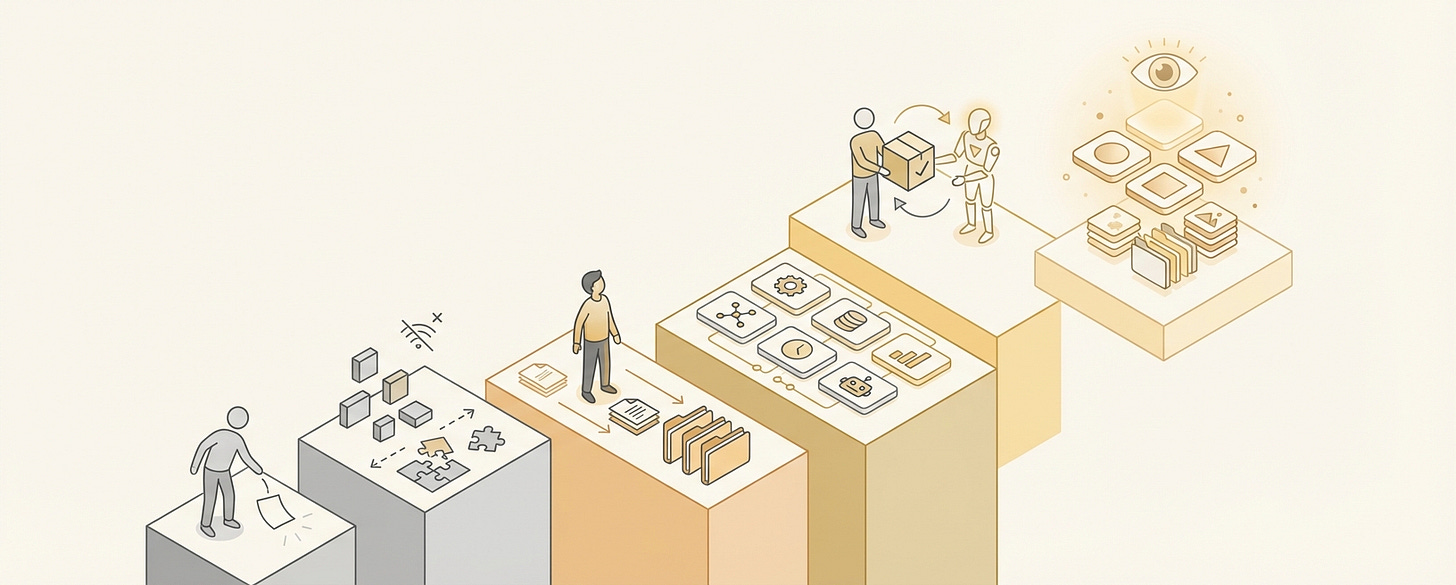

1. The Specification Stack

Every function you delegate, to a human or an agent, requires four layers of specification. Miss one and the output degrades predictably.

Layer 1: Identity. What is this function? What business does it serve? Who is the audience? This is not a mission statement. It is the context that prevents drift. Without it, the agent optimizes locally: each individual output looks fine, but the collection has no coherence.

Layer 2: Standards. What does good look like? Not “high quality”; that means nothing. Specific, auditable criteria. For a newsletter, this means voice rules, banned vocabulary, structural templates, and sentence-level patterns. For customer support, it means response time targets, escalation rules, tone guidelines, and resolution definitions.

Layer 3: Boundaries. What can the agent decide? What requires a human? This is where most founders fail. They either restrict everything (making the agent useless) or restrict nothing (making the agent dangerous). The answer is a decision map: these categories are autonomous, these require review, these are human-only.

Layer 4: Feedback. How does the agent know if it is working? Not “the human checks sometimes.” A structured evaluation loop where output is scored against the standards from Layer 2, and the scores route back into the next cycle.

Miss Layer 1 and you get drift. Miss Layer 2 and you get mediocrity. Miss Layer 3 and you get either paralysis or chaos. Miss Layer 4 and you get all three, slowly, without noticing.
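One way to make the four layers checkable is to treat them as named slots and derive the failure modes from whichever slots are empty. This is a minimal sketch, assuming each layer is stored as a string (a document path or its text); the class and function names are hypothetical, while the failure modes come straight from the paragraph above.

```python
from dataclasses import dataclass
from typing import Optional

# Failure modes from the text: one per missing layer. A missing
# feedback layer eventually produces all three.
FAILURE_IF_MISSING = {
    "identity": "drift",
    "standards": "mediocrity",
    "boundaries": "paralysis or chaos",
}


@dataclass
class SpecificationStack:
    """Hypothetical container: each field holds the text or path of one layer."""
    identity: Optional[str] = None    # what the function is, who it serves
    standards: Optional[str] = None   # auditable criteria for "good"
    boundaries: Optional[str] = None  # decision map: autonomous / review / human-only
    feedback: Optional[str] = None    # how output is scored and routed back


def predicted_failures(stack: SpecificationStack) -> set[str]:
    """Return the failure modes this stack is exposed to under the four-layer model."""
    failures = {
        mode
        for layer, mode in FAILURE_IF_MISSING.items()
        if not getattr(stack, layer)
    }
    if not stack.feedback:
        # Without feedback, every failure mode shows up eventually, unnoticed.
        failures |= set(FAILURE_IF_MISSING.values())
    return failures
```

A stack with identity, standards, and feedback but no boundary map is predicted to fail as `{'paralysis or chaos'}`; drop the feedback layer as well and it inherits all three.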

2. Why “Just Review the Output” Fails

The most common delegation pattern I see: give the agent the work, review everything it produces, fix what is wrong. This feels responsible. It is actually the worst of both worlds.

You have not saved time because you are reviewing everything. You have not improved quality because your corrections do not feed back into the system. And you have trained yourself to distrust the agent, which means you review more carefully over time, not less. The cost of the agent stays constant. The cost of your attention increases.

Review-everything is not delegation. It is supervision cosplaying as leverage.

The alternative is to build the evaluation into the system. Define the rubric. Score the output before it reaches you. Route only the failures to human review.
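A minimal sketch of that routing, assuming the rubric is a set of pass/fail criteria and reading the 85% and 90% figures from this post's first run as a review gate and a ship target. The criterion names, the `evaluate` function, and the three-way routing are hypothetical; the post does not spell out what happens in each band.

```python
from dataclasses import dataclass

# Thresholds from this post's first run; the routing per band is an assumption.
REVIEW_THRESHOLD = 0.85
TARGET = 0.90


@dataclass
class Verdict:
    score: float
    route: str                # "revise", "human_review", or "ship"
    failed_criteria: list[str]


def evaluate(results: dict[str, bool]) -> Verdict:
    """Score a draft as the share of rubric criteria it passes, then route it.

    `results` maps each (hypothetical) rubric criterion to pass/fail.
    """
    if not results:
        raise ValueError("rubric is empty")
    failed = [name for name, passed in results.items() if not passed]
    score = (len(results) - len(failed)) / len(results)
    if score >= TARGET:
        route = "ship"          # meets the target, no human needed
    elif score >= REVIEW_THRESHOLD:
        route = "human_review"  # only near-misses reach a person
    else:
        route = "revise"        # back to the agent with the failure list
    return Verdict(score, route, failed)
```

With eight criteria and one failure, a draft scores 0.875 and lands in human review with the failed criterion attached, so the human sees only what the rubric could not settle.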

This is how any review-heavy function improves. The first operator run of The Compounding Founder included a manual evaluation step: the agent scanned its own draft for banned words, checked structural patterns, and scored compliance with each voice trait one by one. That evaluation took the full output of the run. By the time the taxonomist agent within the agent control plane saw the failure report, a programmatic content scorer, designed to do in seconds what the manual scan did in paragraphs, had been scoped, built, and activated. The review bottleneck did not disappear because the agent got smarter. It disappeared because the evaluation became an artifact, not a task.
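The banned-word pass is the easiest piece of such a scorer to make programmatic. This is a minimal sketch, assuming whole-word, case-insensitive matching; the post does not list its thirty banned words, so the entries below are placeholders and `scan_banned` is a hypothetical name, not the scorer the post describes.

```python
import re

# Placeholder entries: the actual thirty banned words are not listed in the post.
BANNED = ["delve", "game-changer", "synergy", "revolutionize"]


def scan_banned(text: str, banned: list[str]) -> dict[str, int]:
    """Count whole-word, case-insensitive occurrences of each banned term."""
    hits = {}
    for term in banned:
        # Lookarounds instead of \b so hyphenated terms still match as whole words.
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        count = len(re.findall(pattern, text, flags=re.IGNORECASE))
        if count:
            hits[term] = count
    return hits
```

For example, `scan_banned("Let us delve into synergy. SYNERGY wins.", BANNED)` returns `{'delve': 1, 'synergy': 2}`, while "delves" would not count as a hit for "delve".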

3. The Artifact Test

Here is a practical test for whether you have actually delegated a function or just abandoned it.

Open the folder, document, or system where the agent does its work. Count the artifacts. Not the outputs — the inputs. The things you created to tell the agent how to operate.

If the count is zero or one, you have not delegated. You have assigned a task and hoped.

A properly delegated function should have:

an identity document (what this function is, who it serves)

a scorecard (what metrics matter, what the targets are)

a standards artifact (what good looks like, specifically)

a structure artifact (the default patterns and templates)

a boundary map (what the agent decides, what it escalates)

a feedback loop (how output is evaluated and how evaluations route back)

Six artifacts minimum. Most founders I talk to have zero. They have a prompt and a prayer.
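The artifact test can be automated as a simple file check. This is a minimal sketch, assuming each artifact lives as its own file in the agent's workspace; the file names and the `artifact_test` and `verdict` functions are hypothetical, and the "zero or one" cutoff is the one stated above.

```python
from pathlib import Path

# Hypothetical file names for the six artifacts listed above.
REQUIRED_ARTIFACTS = {
    "identity": "identity.md",
    "scorecard": "scorecard.md",
    "standards": "standards.md",
    "structure": "structure.md",
    "boundary map": "boundaries.md",
    "feedback loop": "feedback.md",
}


def artifact_test(workspace: Path) -> list[str]:
    """Return the names of required artifacts missing from the workspace."""
    return [
        name
        for name, filename in REQUIRED_ARTIFACTS.items()
        if not (workspace / filename).is_file()
    ]


def verdict(workspace: Path) -> str:
    """Apply the rule from the text: zero or one artifact means not delegated."""
    missing = artifact_test(workspace)
    present = len(REQUIRED_ARTIFACTS) - len(missing)
    if present <= 1:
        return "not delegated: a task and a hope"
    if missing:
        return "partially specified, missing: " + ", ".join(missing)
    return f"delegated: all {present} artifacts present"
```

Counting inputs rather than outputs is the point: the check never looks at what the agent produced, only at what you gave it.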

The number of artifacts is not bureaucracy. It is the surface area of your specification. Less surface area means more drift, more review, more frustration, and eventually more abandonment disguised as a conclusion that “agents are not ready.”

4. Delegation as Architecture

The uncomfortable implication is that delegation is not a soft skill. It is architecture.

When you delegate accounting to a bookkeeper, you give them a chart of accounts, a set of categorization rules, access to the bank feeds, and a monthly review cadence. You do not give them your bank login and say “handle the money.” The chart of accounts is an artifact. The categorization rules are an artifact. The review cadence is a feedback loop. You built an operating surface without thinking about it because accounting has had centuries to develop the specification layer.

AI agent delegation is new enough that the specification layer does not exist yet for most functions. So founders skip it and blame the agent when the output is wrong.

Building the specification layer feels slow. It feels like overhead. It feels like the opposite of the speed advantage agents are supposed to provide. But the math is simple. Two hours building a voice system saves twenty hours of post-by-post corrections over the next quarter. One hour defining a decision boundary map prevents the three-day crisis when the agent makes the wrong call on something you never told it was sensitive.
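A decision boundary map can be as small as a lookup table. This is a minimal sketch with hypothetical category names; the design choice that matters is the default. Any category nobody classified falls through to human-only, which is what keeps the agent from making a call on something you never told it was sensitive.

```python
from enum import Enum


class Authority(Enum):
    AUTONOMOUS = "autonomous"  # agent decides and acts
    REVIEW = "review"          # agent drafts, a human approves
    HUMAN_ONLY = "human_only"  # agent escalates without acting


# Hypothetical categories for a content function.
BOUNDARY_MAP = {
    "schedule_social_post": Authority.AUTONOMOUS,
    "publish_newsletter": Authority.REVIEW,
    "change_pricing": Authority.HUMAN_ONLY,
    "reply_to_complaint": Authority.HUMAN_ONLY,
}


def authority_for(category: str) -> Authority:
    """Look up who decides; anything unmapped is treated as human-only."""
    return BOUNDARY_MAP.get(category, Authority.HUMAN_ONLY)
```

Failing closed costs an occasional unnecessary escalation; failing open costs the three-day crisis.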

The founders who get the most from agents are not the ones with the best prompts. They are the ones who spent the most time on the specification layer before the agent started working.

5. What This Looks Like in Practice

I had been publishing The Compounding Founder for only a couple of months: five posts, all written by hand, each taking a full day from outline to distribution. In the first week of April, I stopped writing and started specifying. I built the voice system, the content structure, the distribution config, the asset strategy, and the scorecard. Six artifacts. Four hours of specification work.

Then I handed the content function to an agent.

This post is the output of that handoff. An agent read the specification layer, selected the topic based on gap analysis of the first five posts, wrote the draft, produced the share assets, scored itself against the voice system, and filed a failure report listing ten things it could not do.

That sentence should create discomfort. It creates discomfort here too. But the discomfort is the point. The question is not whether an agent can write a newsletter post. The question is whether the specification layer is precise enough that the output meets the standard and whether the evaluation loop is honest enough to catch it when it does not.

The first draft scored 88% on voice compliance. The gap was data density: not enough hard numbers that land. That is not “the agent failed.” That is “the specification needs one more layer.” The system improves by improving the specification, not by hoping for a better model.
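That missing layer could start as a data-density rule. This is a crude sketch, assuming "hard numbers" can be approximated by numeric tokens and density is measured per 100 words; the regex, the 2.0 minimum, and the function names are all hypothetical, not the scorer this post actually used.

```python
import re

# Matches numbers such as 88, 88%, 4.5, 1,200 (a leading "$" is not captured).
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def data_density(text: str) -> float:
    """Numeric tokens per 100 words: a crude proxy for data density."""
    words = text.split()
    if not words:
        return 0.0
    return 100 * len(NUMBER.findall(text)) / len(words)


def density_rule(text: str, minimum: float = 2.0) -> bool:
    """Hypothetical rule: pass if the draft has at least `minimum` numbers per 100 words."""
    return data_density(text) >= minimum
```

A rule like this will not tell you whether a number hits hard, only whether there are any; it turns "not enough hard numbers" from a vague note into a pass/fail criterion the rubric can route on.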

If this changed how you think about delegation, reply with your current setup — I will tell you which artifacts are missing. If you are not subscribed, this is what we do here every week: one operating principle, one framework, one deliverable that makes the idea real.

The insight is free. The specification stack I use to delegate an entire business function to an agent, including the six artifacts, the decision boundary template, and the evaluation rubric, is below.